Here’s the uncomfortable truth: every time you ask ChatGPT or Claude a question, your words travel to external servers. For most companies, that’s fine. For law firms, defense contractors, healthcare systems, and companies sitting on trade secrets? It’s a nightmare.

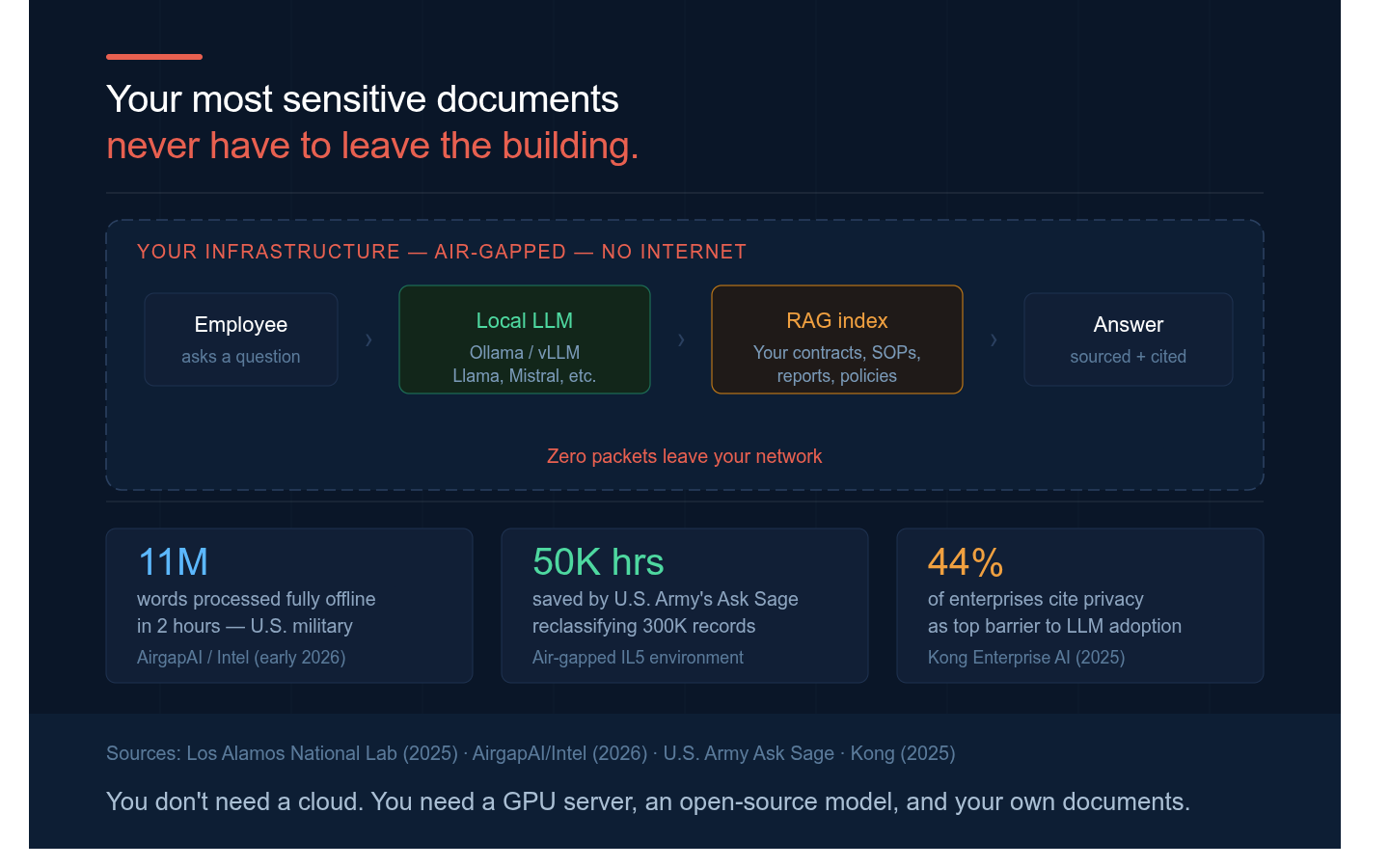

A recent survey found that 44% of enterprises cite data privacy as the top barrier to LLM adoption. The logic is simple: a powerful AI tool is of little use if you can’t give it your most sensitive documents without breaking compliance rules or exposing confidential information.

The solution exists, but most teams don’t know about it yet. You can run a large language model directly on your own hardware, completely air-gapped from the internet, with zero data leaving your building. No external API calls. No cloud middleman. Just you, your documents, and your own infrastructure.
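One way to make the “no external API calls” promise checkable in code is to have the client refuse any model endpoint that isn’t on a loopback or private-range address. The sketch below assumes the model is served over HTTP inside your network; the helper `is_internal_endpoint` is hypothetical, and network-level controls such as firewall rules remain the real safeguard, since application code alone can’t guarantee an air gap.

```python
import ipaddress
from urllib.parse import urlparse


def is_internal_endpoint(url: str) -> bool:
    """Return True only if the URL's host is loopback or a private-range IP.

    Hypothetical helper: any hostname other than 'localhost' is rejected,
    because a DNS name could resolve to a public address.
    """
    host = urlparse(url).hostname  # brackets around IPv6 literals are stripped
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not a literal IP address (e.g. a public DNS name): refuse it.
        return False
    return addr.is_loopback or addr.is_private


# Example checks before sending any document text to a model server.
print(is_internal_endpoint("http://localhost:11434"))      # True
print(is_internal_endpoint("http://10.0.0.5:11434"))       # True
print(is_internal_endpoint("https://api.example.com/v1"))  # False
```

Rejecting hostnames outright is deliberately strict; a looser variant could resolve the name and check the resulting address, at the cost of trusting DNS.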

Open-weight models like Llama 2 and Mistral have matured enough to handle real work. Serve them on-premises with a runtime such as Ollama, connect them to your documents with a framework such as LangChain, and you get a private AI chatbot that answers questions from your own files, can be fine-tuned on your domain data, and keeps everything inside your firewall.
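Here is a minimal sketch of that pattern, assuming an Ollama server running locally on its default port (11434) with a model already pulled; the model name `mistral` and all helper names are assumptions. Rather than LangChain, it uses naive keyword-overlap retrieval to pick the most relevant document chunks, then sends them with the question to Ollama’s `/api/generate` endpoint using only the Python standard library. A production setup would more likely use embedding-based search for the retrieval step.

```python
import json
import re
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # assumed local default port
MODEL = "mistral"  # assumption: any model you have pulled locally


def tokenize(text: str) -> set[str]:
    """Lowercase word set used for crude keyword matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_chunks(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Rank chunks by how many question words they share (toy retrieval)."""
    q = tokenize(question)
    ranked = sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)
    return ranked[:k]


def build_payload(question: str, chunks: list[str], k: int = 2) -> dict:
    """Assemble a non-streaming /api/generate request grounded in local docs."""
    context = "\n---\n".join(top_chunks(question, chunks, k))
    prompt = (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
    return {"model": MODEL, "prompt": prompt, "stream": False}


def ask_local_llm(question: str, chunks: list[str]) -> str:
    """POST the request to the local Ollama server and return its answer text."""
    data = json.dumps(build_payload(question, chunks)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.loads(resp.read())["response"]
```

Calling `ask_local_llm(question, docs)` with a list of text chunks returns the model’s answer as a string; `build_payload` can be inspected without a running server to confirm exactly what text would be sent.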

The tradeoff? You manage the infrastructure yourself, and the models you can run on your own hardware generally trail the largest hosted models, like those behind ChatGPT, in polish and capability. But for industries where confidentiality isn’t negotiable, that’s a worthwhile exchange.

The future of enterprise AI isn’t cloud-first. It’s privacy-first. And that future is already here.